It’s only been four years, but I’m itching to write another blog post. This one won’t be long – I just want to spend a bit of time talking about how I got voice control working for a recently (re-)released video game called Phoenix Wright: Ace Attorney Trilogy. Here’s a video that will mean nothing to you if you didn’t understand any of those words (yet):

Phoenix Who?

A bit of history for the uninitiated. The Ace Attorney game series is a long-running staple of developer Capcom, focused around the trials and tribulations (hehe) of colourful, fictional lawyers. The first three games in the series revolve around Phoenix Wright, a junior lawyer at the Fey & Co. law firm. Originally released on the Game Boy Advance, the games alternate between sequences of gathering evidence and fighting cases in court.

Ace Attorney is actually a disguised example of the visual novel genre: the game does not involve platforming, simulation, shooting, or RPG elements. Instead, the game is played by reading through many, many text boxes, punctuated with player input in the form of finding clues, choosing dialogue responses, and working out which piece of evidence to present or which piece of testimony to question. It’s been quite successful and has spawned 10 games so far, with lead character Phoenix Wright appearing as a playable character in the similarly long-running Marvel vs. Capcom fighting game series.

Objection!

But what has voice control got to do with it? Well, when Phoenix Wright was first re-released on the Nintendo DS, Capcom was keen on capitalising on the DS’ unique functionalities: in addition to spreading the game out across both screens, it also took advantage of the bottom touch screen and (here we go) the microphone built into the device. Usage of the last item was purely optional, but if a player wanted to feel more like legendary lawyer Phoenix Wright, they could hold a button to activate the microphone and say (or shout, if they were doing it properly!) one of the three key-phrases recognised by the game: “Objection!”, “Take That!”, and “Hold It!”

When used at the appropriate time, this triggered the corresponding action in the game. “Objection!” would present evidence that contradicts a witness’ statement, “Take That!” would do the same in support of your own statements, and “Hold It!” would press a witness for more information on one of their statements.

Of course, each of these actions mapped to a button on the keypad as well, and it was always faster to press the button. But where’s the fun in that?! Unfortunately, it seems that Capcom decided to move away from the microphone gimmick in all subsequent re-releases, likely because most of the consoles it was released on did not have native microphone support without the purchase of a peripheral.

This includes the latest round of re-releases on Switch, Xbox One, PlayStation 4 and PC. But that last one – the PC… Perhaps there’s some hope? Perhaps some nostalgic software engineer with too much free time will come to the rescue and develop the hackiest hack that has ever hacked? Will enterprising digital lawyers be able to scream ‘Objection!’ at the top of their lungs once more?

The Script

Of course they will. Armed with the knowledge you now have, you can finally appreciate the technological marvel at the top of the page. But how does it work? In fact, it was very simple. With the current state of software, it’s easier than ever to cobble together simple scripts that leverage existing libraries to great effect. The Objection! Python script is the combination of two libraries – one that manages detecting the key-phrases spoken into a microphone, and another that sends key presses to the game. The former is generally called a ‘wake word’ engine, and the latter is known as GUI automation. The total code in the script doesn’t even hit a hundred lines, and if you threw a competent programmer at it, you could probably halve it to fifty or so. The script runs in the background while the user plays the game, waiting for them to activate it through a wake word.

Wake Words

If you own an Amazon Echo, you already know what a wake word is. The purpose of a wake word is to tell a computer system that you’re about to address it. For instance, the default wake word for an Echo is ‘Alexa’. For Android phones, it’s ‘OK Google’. For an iPhone, it’s ‘Hey Siri’. The words are carefully chosen so as not to come up in natural speech (unless you happen to know a Siri, or an Alexa. Hopefully you don’t know anyone named ‘OK Google’, but the future is still young…).

Once a wake word is spoken, the device will then listen for additional input and then send it off to the cloud to be processed. But the crucial difference between the input that follows a wake word and a wake word itself is that wake words can be processed without an internet connection. This serves multiple purposes: one, it protects your privacy. The device is always listening, but only for the wake word. Only after the wake word is spoken will you be recorded. Two, it saves an incredible amount of money in processing power and bandwidth. Imagine everything you said had to be uploaded to the cloud for processing! The costs would be enormous, and the strain on the cloud would be immense.
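
To make the two-stage idea concrete, here’s a minimal sketch of wake word gating. Everything in it is hypothetical: `is_wake_word` stands in for an on-device detector, the "frames" are plain strings rather than audio, and `frames_to_upload` and `command_length` are names made up for illustration. The only point is that nothing leaves the device until the detector fires.

```python
from typing import Callable, Iterable, List


def frames_to_upload(
    frames: Iterable[str],
    is_wake_word: Callable[[str], bool],
    command_length: int = 2,
) -> List[str]:
    """Return only the frames that follow a wake word.

    Hypothetical sketch: `is_wake_word` stands in for a local, offline
    detector, and `command_length` is how many frames to capture after it.
    """
    uploaded = []
    remaining = 0  # frames still to capture for the current command
    for frame in frames:
        if remaining > 0:
            uploaded.append(frame)  # only now would audio leave the device
            remaining -= 1
        elif is_wake_word(frame):
            remaining = command_length  # start capturing the frames that follow
        # otherwise the frame is discarded locally and never uploaded
    return uploaded


heard = ["chatter", "alexa", "play", "jazz", "more chatter"]
print(frames_to_upload(heard, lambda frame: frame == "alexa"))  # ['play', 'jazz']
```

The chatter before and after the command never makes it into the result, which is the privacy and bandwidth argument in miniature.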

For my script, it was not necessary to capture additional input after the wake words. It was enough that the wake words could be detected and the window automation triggered. Several wake word engines exist for non-commercial use. I investigated three: PocketSphinx, Snowboy, and Porcupine. Of the three, I ended up using Porcupine. PocketSphinx is geared less towards wake words than towards general speech processing, which is cool, but on review it ultimately seemed a bit complicated to integrate for this use case. Snowboy seemed very promising, but isn’t supported on Windows. This left Porcupine, which in my experience has been extremely simple to use. The only downside is that, even for non-commercial use, Porcupine’s wake word definition files expire after 90 days and must be regenerated with their closed-source tool. Fortunately, I intend to finish the game within that time-frame..! If I were to revisit the project, I would take a longer look at PocketSphinx.

Here’s a snippet of how I use Porcupine (heavily ~~stolen~~ influenced by their demo code):

    import struct

    def run():
        try:
            while True:  # Forever...
                # Read one frame of audio from the microphone
                pcm = audio_stream.read(porcupine.frame_length, exception_on_overflow=False)
                # Put it into a format Porcupine understands (16-bit samples)
                pcm = struct.unpack_from("h" * porcupine.frame_length, pcm)
                # Feed Porcupine the audio data, and trigger an action if one is detected
                result = porcupine.process(pcm)
                if result == OBJECTION:
                    print("OBJECTION!")
                    objection()
                elif result == TAKE_THAT:
                    print("TAKE THAT!")
                    takethat()
                elif result == HOLD_IT:
                    print("HOLD IT!")
                    holdit()
        except KeyboardInterrupt:
            # The snippet is cut short here; stopping on Ctrl+C is an assumption
            print("Stopping...")
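
The `struct.unpack_from` line is the least obvious part of that loop, so here’s a small standalone sketch of what it does. It assumes the microphone delivers 16-bit signed samples (that’s what the `"h"` format code means). The `frame_length` of 4 and the sample values are made up for illustration, and no microphone or Porcupine is involved.

```python
import struct

frame_length = 4  # made-up value; the real one comes from porcupine.frame_length

# Pretend this came from the microphone: four 16-bit signed samples as raw bytes.
raw = struct.pack("h" * frame_length, 0, 1000, -1000, 32767)

# Same call as in the loop: one "h" per sample turns the bytes back into integers.
samples = struct.unpack_from("h" * frame_length, raw)

print(len(raw))  # 8, i.e. 2 bytes per sample
print(samples)   # (0, 1000, -1000, 32767)
```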

GUI Automation

So we have a method of triggering an action in the script, but what actions do we trigger? There are multiple ways of triggering actions in an application that you don’t have API access to. One way is to disassemble the program, locate the hooks used for triggering subroutines, and inject calls to the application to run those subroutines. This is complicated and sounds like something out of ‘The Matrix’. An easier method is to use GUI automation.

GUI automation is a very fancy way of saying that we want a piece of software to pretend to be a human. It does this by generating button or keyboard presses in the same way a person would. For example, I could use GUI automation to keep my computer awake by automatically sending the space bar key to the operating system every minute. That’s not a good use case, but perhaps this gif will be more illuminating:

Much clearer. So what does this have to do with yelling “Objection!”? The answer is: Everything.

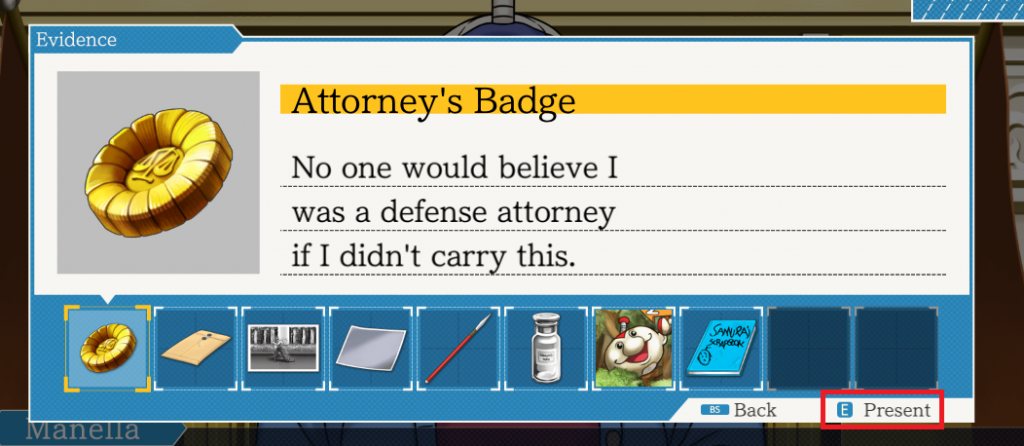

In the above screenshot, I’ve highlighted the GUI element that tells you how to object(!). You have two options: One is to click the button with your mouse. The other is to press the ‘E’ key on your keyboard. If only we had some sort of way to do either of those things through a script…

We do! We have the power of GUI automation on our side. By using the pywinauto library, we can write a couple of lines of code to send the ‘E’ key to the running game. In order to truly emulate the button press, we have to both mimic the act of pushing the key down, and then releasing the key.

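    import pywinauto

    def connectPhoenixWindow():
        # Attach to the already-running game by matching its window title
        app = pywinauto.application.Application()
        app.connect(title_re="Phoenix Wright: Ace Attorney Trilogy")
        return app

    # Assumed here so the functions below have an `app` to talk to
    app = connectPhoenixWindow()

    def objection():
        app.top_window().type_keys("{e down}")  # push 'E' down...
        app.top_window().type_keys("{e up}")    # ...and release it

    def takethat():
        app.top_window().type_keys("{e down}")
        app.top_window().type_keys("{e up}")

    def holdit():
        app.top_window().type_keys("{q down}")
        app.top_window().type_keys("{q up}")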

Do those function names look familiar? Yes, those are the same functions that are called when a wake word is detected! It couldn’t be any easier. Wake word engine detects the phrase, GUI automation triggers the button press.
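
Since the three handlers differ only in the key they press, the pairing could also be written as a lookup table. This is a sketch of an alternative, not what the script actually does: the values 0, 1 and 2 are assumed (they stand for whatever the wake word engine reports for each phrase), and `send` is a hypothetical stand-in for something like `app.top_window().type_keys`, so the example runs without the game or pywinauto installed.

```python
from typing import Callable, Optional

# Assumed values: whatever the wake word engine reports for each phrase.
OBJECTION, TAKE_THAT, HOLD_IT = 0, 1, 2

# Keys taken from the functions above: 'E' for the first two, 'Q' for "Hold It!".
KEY_FOR_PHRASE = {OBJECTION: "e", TAKE_THAT: "e", HOLD_IT: "q"}


def key_sequence(key: str) -> str:
    """Build a press-then-release sequence in the {key down}/{key up} style."""
    return "{%s down}{%s up}" % (key, key)


def handle(result: int, send: Callable[[str], None]) -> Optional[str]:
    """Send the key for a detected phrase; ignore any other result."""
    key = KEY_FOR_PHRASE.get(result)
    if key is None:
        return None
    sequence = key_sequence(key)
    send(sequence)
    return sequence


sent = []
handle(HOLD_IT, sent.append)  # a phrase was detected
handle(-1, sent.append)       # unknown result, nothing is sent
print(sent)  # ['{q down}{q up}']
```

Adding a fourth phrase would then be a one-line change to the table rather than a new function.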

And..?

And what? That’s it! That’s all it takes to get voice control back into Phoenix Wright. The script itself sits quietly in the background while the game is running, detects the phrases and sends the keys to the game, mimicking the action of the player doing it themselves. If you want to see the complete code (or even download some pre-compiled binaries), take a look at the GitHub repository. Perhaps the simplicity of it all will inspire you to find other (more worthwhile) ways to use voice as a way to automate otherwise manual tasks. That’s all, folks. Hope you enjoyed!